Psychological Process

Research Team

Research Summary

We conduct research to elucidate the computational mechanisms of the human mind (emotions, cognition, and behavior) in order to develop robots with minds.

- Main Research Fields

- Experimental psychology

- Basic/Social brain science

- Intelligent robotics

- Keywords

- Emotion

- Facial expression

- Social interaction

- Human-robot interaction

- Neuroimaging

- Research theme

- Computational elucidation of the human mind and its implementation in robots, in collaboration with engineers.

- Psychological evaluation of robots' functions.

- Interdisciplinary research across psychology, informatics, and robotics, especially on emotional communication.

Wataru Sato

History

- 2005

- Primate Research Institute, Kyoto University

- 2010

- Hakubi Center, Kyoto University

- 2014

- Graduate School of Medicine, Kyoto University

- 2017

- Kokoro Research Center, Kyoto University

- 2020

- RIKEN

Award

- 2011

- Award for Distinguished Early and Middle Career Contributions, The Japanese Psychological Association

Members

- Chun-Ting Hsu

- Research Scientist

- Shushi Namba

- Research Scientist

- Koh Shimokawa

- Technical Staff I

- Junyao Zhang

- Postdoctoral Researcher

- Shinya Nishida

- Senior Visiting Scientist

- Masaru Usami

- Visiting Technician

- Megumi Sakiyama

- Research Part-time Worker II

Former members

- Akie Saito

- Research Scientist (2020/06-2024/03)

- Saori Namba

- Research Part-time Worker I (2021/04-2023/03)

- Rena Kato

- Research Part-time Worker II (2020/07-2022/01)

- Naoya Kawamura

- Research Part-time Worker II and Student Trainee (2022/06-2025/03)

- Dongsheng Yang

- Student Trainee (2022/04-2025/03)

- Anna Kelbakh

- Student Trainee (2024/07-2025/03)

- Budu Tang

- Student Trainee (2023/05-2026/03)

Research results

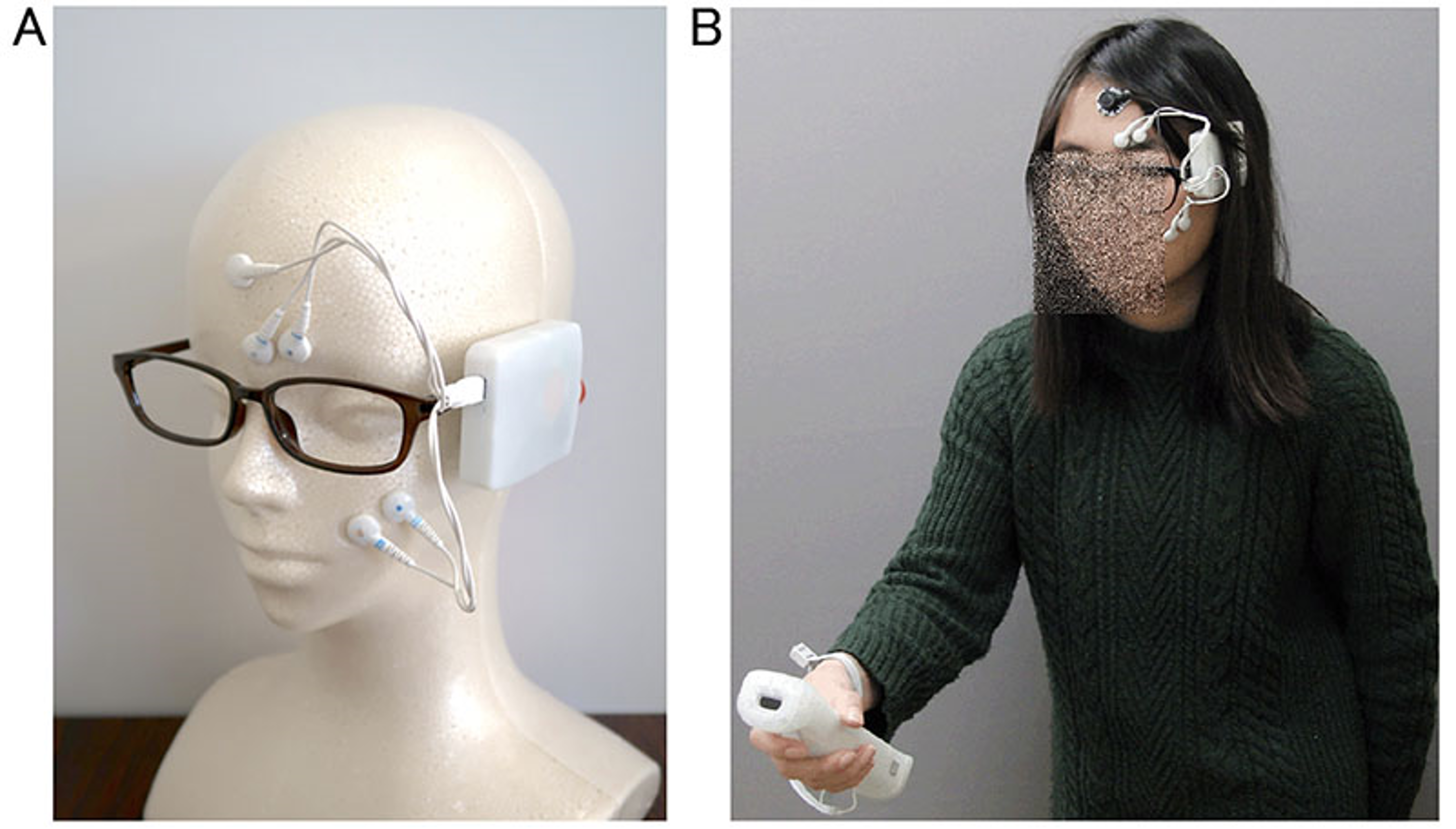

Emotional valence sensing using a wearable facial EMG device

(Sato, Murata, Uraoka, Shibata, Yoshikawa, & Furuta: Sci Rep)

Emotion sensing using physiological signals in real-life situations can be practically valuable. Previous studies developed wearable devices that record autonomic nervous system activity, which reflects emotional arousal. However, no study has determined whether emotional valence can be assessed using wearable devices.

To this end, we developed a wearable device to record facial electromyography (EMG) from the corrugator supercilii (CS) and zygomatic major (ZM) muscles.

To validate the device, in Experiment 1 we recorded participants' facial EMG with both a traditional wired device and our wearable device while they viewed emotional films.

Participants viewed the films again and continuously rated their recalled subjective valence during the first viewing. The facial EMG signals recorded using both wired and wearable devices showed that CS and ZM activities were, respectively, negatively and positively correlated with continuous valence ratings.

In Experiment 2, we used the wearable device to record participants' facial EMG while they were playing Wii Bowling games and assessed their cued-recall continuous valence ratings. CS and ZM activities were correlated negatively and positively, respectively, with continuous valence ratings.

These data suggest that facial EMG signals recorded by a wearable device could be used to assess subjective emotional valence in future naturalistic studies.

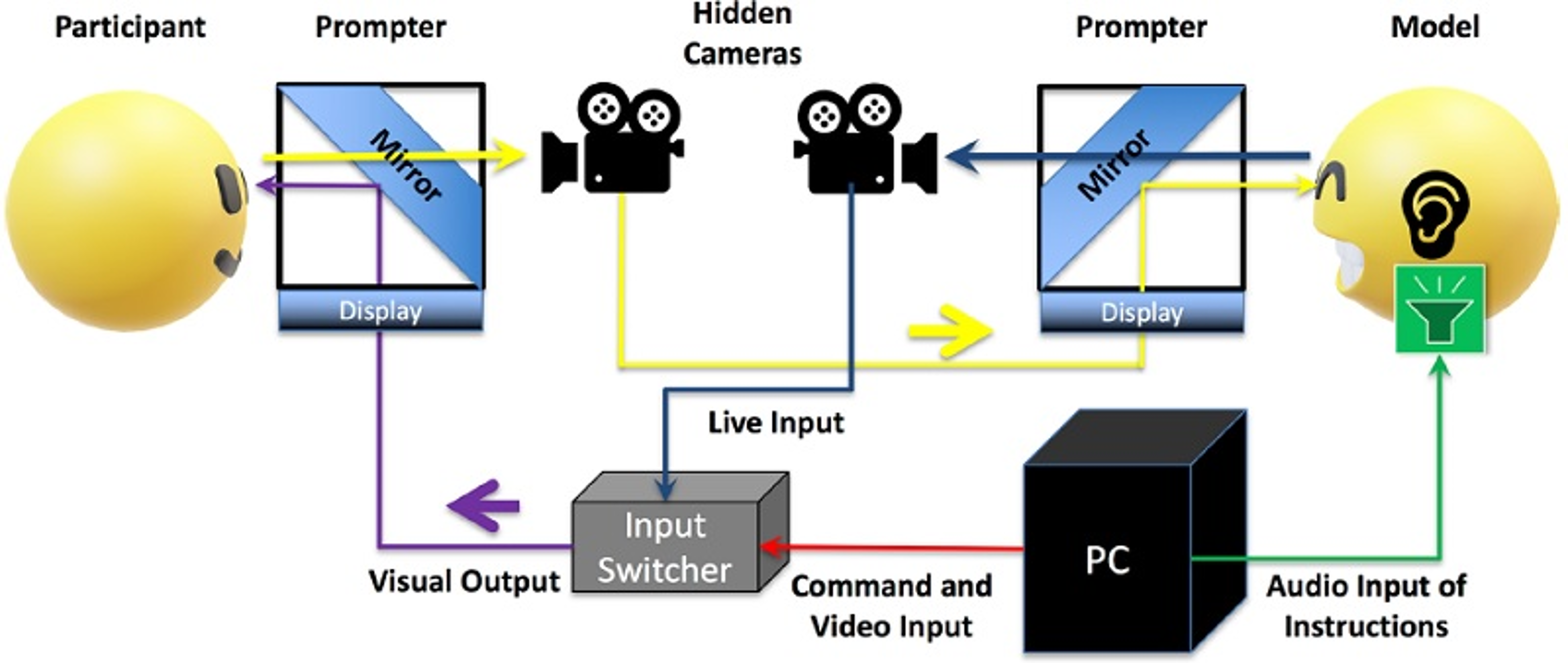

Enhanced emotional and motor responses to live vs. videotaped dynamic facial expressions

(Hsu, Sato, & Yoshikawa: Sci Rep)

Facial expression is an integral aspect of non-verbal communication of affective information. Earlier psychological studies have reported that the presentation of prerecorded photographs or videos of emotional facial expressions automatically elicits divergent responses, such as emotions and facial mimicry.

However, such highly controlled experimental procedures may lack the vividness of real-life social interactions.

This study incorporated a live image relay system that delivered models’ real-time performance of positive (smiling) and negative (frowning) dynamic facial expressions or their prerecorded videos to participants. We measured subjective ratings of valence and arousal and facial electromyography (EMG) activity in the zygomaticus major and corrugator supercilii muscles.

Subjective ratings showed that, in the positive emotion condition, live facial expressions elicited higher valence and arousal than the corresponding videos. Facial EMG data showed that, compared with the videos, live facial expressions more effectively elicited facial muscular activity congruent with the models’ positive facial expressions.

The findings indicate that emotional facial expressions in live social interactions are more evocative of emotional reactions and facial mimicry than earlier experimental data have suggested.

Selected Publications

- Hsu, C.-T., Kelbakh, A., Yang, D., Minato, T., & Sato, W. (2026). Multivariate timing and Granger causality analysis of spontaneous facial mimicry in response to android dynamic facial expressions. Sensors, 26, 1881.

- Tang, B., & Sato, W. (2026). Machine learning-based ear thermal imaging for emotion sensing. Sensors, 26, 1248.

- Howlett, P., Baysu, G., Jungert, T., Atkinson, A. P., Namba, S., Sato, W., Mizuno, K., & Rychlowska, M. (2026). Friendships are more group-oriented in the UK than in Japan. British Journal of Social Psychology, 65, e70040.

- Hsu, C.-T., Minato, T., Shimokawa, K., & Sato, W. (2026). Receiving human and android facial mimicry induces empathy experiences and oxytocin release. Computers in Human Behavior Reports, 21, 100895.

- Sato, W.*, Kochiyama, T.*, Abe, N., Asano, K., & Yoshikawa, S. (* equal contributors) (2026). Neural network dynamics associated with facial and subjective emotional responses. Communications Biology, 9, 91.

- Tang, B., & Sato, W. (2025). Ear thermal imaging for emotion sensing. Scientific Reports, 15, 41571.

- Yang, D., Sato, W., Hsu, C.-T., Minato, T., & Nishida, S. (2025). Visually detectable facial mimicry in response to android facial expressions. Scientific Reports, 15, 41376.

- Yamamoto, T., Tanaka, R., Kochiyama, T., Nota, Y., Tsuchiya, H., Kawamoto, M., & Sato, W. (2025). Pleasantness emotions and neural activity induced by the multimodal crispiness of monaka ice cream. Frontiers in Nutrition, 12, 1681999.

- Sato, W., Shimokawa, K., Namba, S., & Minato, T. (2026). Multimodal advantage in android emotional expressions. International Journal of Social Robotics, 18, 8.

- Tang, B., Sato, W., & Kawanishi, Y. (2025). Development of machine-learning-based facial thermal image analysis for dynamic emotion sensing. Sensors, 25, 5276.

- Tang, B., Sato, W., Shimokawa, K., Hsu, C.-T., & Kochiyama, T. (2025). Development of pixel-based facial thermal image analysis for emotion sensing. Computers in Human Behavior Reports, 19, 100761.

- Sato, W., Shimokawa, K., & Minato, T. (2025). Exploration of Mehrabian’s communication model with an android. Scientific Reports, 15, 25986.

- Sato, W., Uono, S., & Kochiyama, T. (2025). Benton facial recognition test scores for predicting fusiform gyrus activity during face perception. Scientific Reports, 15, 22507.

- Namba, S., Saito, A., & Sato, W. (Chapter; 2025). Computational analysis of value learning and value-driven detection of neutral faces by young and older adults. In Dolcos, F., Kemp, A., & Hamm, A. O. (eds.). Insights in emotion science. Frontiers Media SA: Lausanne.

- Sato, W., Ishihara, S., Ikegami, A., Kono, M., Nakauma, M., & Funami, T. (2025). Dynamic concordance between subjective and facial EMG hedonic responses during the consumption of gel-type food. Current Research in Food Science.

- Sato, W.*, Kochiyama, T.*, & Uono, S. (* equal contributors) (2025). Neural electrical correlates of subjective happiness. Human Brain Mapping, 46, e70224.

- Nomiya, H., Shimokawa, K., Namba, S., Osumi, M., & Sato, W. (2025). An artificial intelligence model for sensing affective valence and arousal from facial images. Sensors, 25, 1188.

- Diel, A., Sato, W., Hsu, C.-T., Bäuerle, A., Teufel, M., & Minato, T. (2025). An android can show the facial expressions of complex emotions. Scientific Reports, 15, 2433.

- Sato, W., Shimokawa, K., Uono, S., & Minato, T. (2024). Mentalistic attention orienting triggered by android eyes. Scientific Reports, 14, 23143.

- Sato, W., & Saito, A. (2024). Weak subjective–facial coherence as a possible emotional coping in older adults. Frontiers in Psychology, 15, 1417609.

- Namba, S., Sato, W., Namba, S., Diel, A., Ishi, C., & Minato, T. (2024). How an android expresses “now loading…”: Examining the properties of thinking faces. International Journal of Social Robotics, 16, 1861-1877.

- Zhang, J., Sato, W., Kawamura, N., Shimokawa, K., Tang, B., & Nakamura, Y. (2024). Sensing emotional valence and arousal dynamics through automated facial action unit analysis. Scientific Reports, 14, 19563.

- Sato, W. (2024). Advancements in sensors and analyses for emotion sensing. Sensors, 24, 4166.

- Namba, S., Saito, A., & Sato, W. (2024). Computational analysis of value learning and value-driven detection of neutral faces by young and older adults. Frontiers in Psychology, 15, 1281857.

- Kawamura, N., Sato, W., Shimokawa, K., Fujita, T., & Kawanishi, Y. (2024). Machine learning-based interpretable modeling for subjective emotional dynamics sensing using facial EMG. Sensors, 24, 1536.

- Sato, W., Usui, N., Kondo, A., Kubota, Y., Toichi, M., & Inoue, Y. (2024). Impairment of unconscious emotional processing after unilateral medial temporal structure resection. Scientific Reports, 14, 4269.

- Ishikura, T., Sato, W., Takamatsu, J., Yuguchi, A., Cho, S.-G., Ding, M., Yoshikawa, S., & Ogasawara, T. (2024). Delivery of pleasant stroke touch via robot in older adults. Frontiers in Psychology, 14, 1292178.

- Schiller, D. et al. (2024). The Human Affectome. Neuroscience and Biobehavioral Reviews, 158, 105450.

- Huggins, C. F., Williams, J. H. G., & Sato, W. (2023). Cross-cultural differences in self-reported and behavioural emotional self-awareness between Japan and the UK. BMC Research Notes, 16, 380.

- Namba, S., Sato, W., Namba, S., Nomiya, H., Shimokawa, K., & Osumi, M. (2023). Development of the RIKEN database for dynamic facial expressions with multiple angles. Scientific Reports, 13, 21785.

- Diel, A., Sato, W., Hsu, C.-T., & Minato, T. (2023). Asynchrony enhances uncanniness in human, android, and virtual dynamic facial expressions. BMC Research Notes, 16, 368.

- Sato, W., & Yoshikawa, S. (2023). Influence of stimulus manipulation on conscious awareness of emotional facial expressions in the match-to-sample paradigm. Scientific Reports, 13, 20727.

- Hsu, C.-T., & Sato, W. (2023). Electromyographic validation of spontaneous facial mimicry detection using automated facial action coding. Sensors, 23, 9076.

- Saito, A., Sato, W., & Yoshikawa, S. (2023). Sex differences in the rapid detection of neutral faces associated with emotional value. Biology of Sex Differences, 14, 84.

- Saito, A., Sato, W., & Yoshikawa, S. (2023). Autistic traits modulate the rapid detection of punishment-associated neutral faces. Frontiers in Psychology, 14, 1284739.

- Diel, A., Sato, W., Hsu, C.-T., & Minato, T. (2023). Differences in configural processing for human vs. android dynamic facial expressions. Scientific Reports, 13, 16952.

- Hsu, C.-T., Sato, W., & Yoshikawa, S. (2024). An investigation of the modulatory effects of empathic and autistic traits on emotional and facial motor responses during live social interactions. PLoS One, 19, e0290765.

- Saito, A., Sato, W., & Yoshikawa, S. (2024). Altered emotional mind-body coherence in older adults. Emotion, 24, 15-26.

- Diel, A., Sato, W., Hsu, C.-T., & Minato, T. (2023). The inversion effect on the cubic humanness-uncanniness relation in humanlike agents. Frontiers in Psychology, 14, 1222279.

- Sato, W., Nakazawa, A., Yoshikawa, S., Kochiyama, T., Honda, M., & Gineste, Y. (2023). Behavioral and neural underpinnings of empathic characteristics in a Humanitude-care expert. Frontiers in Medicine, 10, 1059203.

- Sato, W., & Kochiyama, T. (2023). Crosstalk in facial EMG and its reduction using ICA. Sensors, 23, 2720.

- Sato, W.*, Kochiyama, T.*, & Yoshikawa, S. (* equal contributors) (2023). The widespread action observation/execution matching system for facial expression processing. Human Brain Mapping, 44, 3057-3071.

- Ishikura, T., Yuki, K., Sato, W., Takamatsu, J., Yuguchi, A., Cho, S.-G., Ding, M., Yoshikawa, S., & Ogasawara, T. (2023). Pleasant stroke touch on human back by a human and a robot. Sensors, 23, 1136.

- Sato, W. (2023). The neurocognitive mechanisms of unconscious emotional responses. In: Boggio, P. S., Wingenbach, T. S. H., da Silveira Coelho, M. L., Comfort, W. E., Marques, L. M., & Alves, M. V. C. (eds.). Social and affective neuroscience of everyday human interaction: From theory to methodology. Springer. pp. 23-36.

- Yang, D., Sato, W., Liu, Q., Minato, T., Namba, S., & Nishida, S. (2022). Optimizing facial expressions of an android robot effectively: A Bayesian optimization approach. 2022 IEEE-RAS 21st International Conference on Humanoid Robots (Humanoids), 542-549.

- Saito, A., Sato, W., Ikegami, A., Ishihara, S., Nakauma, M., Funami, T., Fushiki, T., & Yoshikawa, S. (2022). Subjective-physiological coherence during food consumption in older adults. Nutrients, 14, 4736.

- Sato, W., Uono, S., & Kochiyama, T. (2022). Neurocognitive mechanisms underlying social atypicalities in autism: Weak amygdala's emotional modulation hypothesis. In: Halayem, S., Amado, I. R., Bouden, A., & Leventhal, B. (eds.). Advances in social cognition assessment and intervention in autism spectrum disorder. Frontiers Media. pp. 32-40.

- Hsu, C.-T., Sato, W., Kochiyama, T., Nakai, R., Asano, K., Abe, N., & Yoshikawa, S. (2022). Enhanced mirror neuron network activity and effective connectivity during live interaction among female subjects. NeuroImage, 263, 119655.

- Sato, W., & Kochiyama, T. (2022). Exploration of emotion dynamics sensing using trapezius EMG and fingertip temperature. Sensors, 22, 6553.

- Sawada, R., Sato, W., Nakashima, R., & Kumada, T. (2022). How are emotional facial expressions detected rapidly and accurately? A diffusion model analysis. Cognition, 229, 105235.

- Namba, S., Sato, W., Nakamura, K., & Watanabe, K. (2022). Computational process of sharing emotion: An authentic information perspective. Frontiers in Psychology, 13, 849499.

- Sawabe, T., Honda, S., Sato, W., Ishikura, T., Kanbara, M., Yoshikawa, S., Fujimoto, Y., & Kato, H. (2022). Robot touch with speech boosts positive emotions. Scientific Reports, 12, 6884.

- Namba, S., Sato, W., & Matsui, H. (in press). Spatio-temporal properties of amused, embarrassed and pained smiles. Journal of Nonverbal Behavior.

- Saito, A., Sato, W., & Yoshikawa, S. (2022). Rapid detection of neutral faces associated with emotional value among older adults. Journal of Gerontology: Psychological Sciences, 77, 1219-1228.

- Sato, W., Namba, S., Yang, D., Nishida, S., Ishi, C., & Minato, T. (2022). An android for emotional interaction: Spatiotemporal validation of its facial expressions. Frontiers in Psychology, 12, 800657.

- Uono, S., Sato, W., Kochiyama, T., Yoshimura, S., Sawada, R., Kubota, Y., Sakihama, M., & Toichi, M. (2022). The structural neural correlates of atypical facial expression recognition in autism spectrum disorder. Brain Imaging and Behavior, 16, 1428-1440.

- Saito, A., Sato, W., & Yoshikawa, S. (2022). Rapid detection of neutral faces associated with emotional value. Cognition and Emotion, 36, 546-559.

- Sato, W., Ikegami, A., Ishihara, S., Nakauma, M., Funami, T., Yoshikawa, S., & Fushiki, T. (2021). Brow and masticatory muscle activity senses subjective hedonic experiences during food consumption. Nutrients, 13, 4216.

- Namba, S., Sato, W., & Yoshikawa, S. (2021). Viewpoint robustness of automated facial action unit detection systems. Applied Sciences, 11, 11171.

- Sato, W. (2021). Color's indispensable role in the rapid detection of food. Frontiers in Psychology, 12, 753654.

- Uono, S., Sato, W., Sawada, R., Kawakami, S., Yoshimura, S., & Toichi, M. (2021). Schizotypy is associated with difficulties detecting emotional facial expressions. Royal Society Open Science, 8, 211322.

- Sato, W., Usui, N., Sawada, R., Kondo, A., Toichi, M., & Inoue, Y. (2021). Impairment of emotional expression detection after unilateral medial temporal structure resection. Scientific Reports, 11, 20617.

- Huggins, C., Cameron, I.M., Scott, N.W., Williams, J.H., Yoshikawa, S., & Sato, W. (2021). Cross-cultural differences and psychometric properties of the Japanese Actions and Feelings Questionnaire (J-AFQ). Frontiers in Psychology, 12, 722108.

- Namba, S., Sato, W., Osumi, M., & Shimokawa, K. (2021). Assessing automated facial action unit detection systems for analyzing cross-domain facial expression databases. Sensors, 21, 4222.

- Zickfeld, J.H., van de Ven, N., Pich, O., Schubert, T.W., Berkessel, J.B., Pizarro, J.J., Bhushan, B., Mateo, N.J., Barbosa, S., Sharman, L., Kökönyei, G., Schrover, E., Kardum, I., Aruta, J.J.B., Lazarevic, L.B., Escobar, M.J., Stadel, M., Arriaga, P., Dodaj, A., Shankland, R., Majeed, N.M., Li, Y., Lekkou, E., Hartanto, A., Özdoğru, A.A., Vaughn, L.A., Espinozay, M.C., Caballero, A., Kolen, A., Karsten, J., Manley, H., Maeura, N., Eşkisu, M., Shani, Y., Chittham, P., Ferreira, D., Bavolar, J., Konova, I., Sato, W., Morvinski, C., Carrera, P., Villar, S., Ibanez, A., Hareli, S., Garcia, A.M., Kremer, I., Götz, F.M., Schwerdtfeger, A., Estrada-Mejia, C., Nakayama, M., Ng, W.Q., Sesar, K., Orjiakor, C., Dumont, K., Allred, T.B., Gračanin, A., Rentfrow, P.J., Schönefeld, V., Vally, Z., Barzykowski, K., Peltola, H.R., Tcherkassof, A., Haque, S., Śmieja, M., Su-May, T.T., IJzerman, H., Vatakis, A., Ong, C.W., Choi, E., Schorch, S.L., Páez, D., Malik, S., Kačmár, P., Bobowik, M., Jose, P., Vuoskoski, J., Basabe, N., Doğan, U., Ebert, T., Uchida, Y., Zheng, M.X., Mefoh, P., Šebeňa, R., Stanke, F.A., Ballada, C.J., Blaut, A., Wu, Y., Daniels, J.K., Kocsel, N., Burak, E.G.D., Balt, N.F., Vanman, E., Stewart, S.L.K., Verschuere, B., Sikka, P., Boudesseul, J., Martins, D., Nussinson, R., Ito, K., Mentser, S., Çolak, T.S., Martinez-Zelaya, C., & Vingerhoets, A. (2021). Tears evoke the intention to offer social support: A systematic investigation of the interpersonal effects of emotional crying across 41 countries. Journal of Experimental Social Psychology, 95, 104137.

- Nishimura, S., Nakamura, T., Sato, W., Kanbara, M., Fujimoto, Y., Kato, H., & Hagita, N. (2021). Vocal synchrony of robots boosts positive affective empathy. Applied Sciences, 11, 2502.

- Sato, W., Murata, K., Uraoka, Y., Shibata, K., Yoshikawa, S., & Furuta, M. (2021). Emotional valence sensing using a wearable facial EMG device. Scientific Reports, 11, 5757.

- Sato, W., Yoshikawa, S., & Fushiki, T. (2021). Facial EMG activity is associated with hedonic experiences but not nutritional values while viewing food images. Nutrients, 13, 11.

- Nishimura, S., Kimata, D., Sato, W., Kanbara, M., Fujimoto, Y., Kato, H., & Hagita, N. (2020). Positive emotion amplification by representing excitement scene with TV chat agents. Sensors, 20, 7330.

- Sato, W., Kochiyama, T., & Yoshikawa, S. (2020). Physiological correlates of subjective emotional valence and arousal dynamics while viewing films. Biological Psychology, 157, 107974.

- Sato, W., Rymarczyk, K., Minemoto, K., & Hyniewska, S. (2020). Cultural differences in food detection. Scientific Reports, 10, 17285.

- Hsu, C.-T., Sato, W., & Yoshikawa, S. (2020). Enhanced emotional and motor responses to live vs. videotaped dynamic facial expressions. Scientific Reports, 10, 16825.

- Sato, W., Uono, S., & Kochiyama, T. (2020). Neurocognitive mechanisms underlying social atypicalities in autism: Weak amygdala’s emotional modulation hypothesis. Frontiers in Psychiatry, 11, 864.

- Sato, W. (2020). Association between dieting failure and unconscious hedonic responses to food. Frontiers in Psychology, 11, 2089.

- Sato, W., Minemoto, K., Sawada, R., Miyazaki, Y., & Fushiki, T. (2020). Image database of Japanese food samples with nutrition information. PeerJ, 8, e9206.

- Sato, W., Minemoto, K., Ikegami, A., Nakauma, M., Funami, T., & Fushiki, T. (2020). Facial EMG correlates of subjective hedonic responses during food consumption. Nutrients, 12, 1174.

- Saito, A., Sato, W., & Yoshikawa, S. (2020). Older adults detect happy facial expressions less rapidly. Royal Society Open Science, 7, 191715.

- Williams, J., Huggins, C., Zupan, B., Willis, M., Van Rheenen, T., Sato, W., Palermo, R., Ortner, C., Krippl, M., Kret, M., Dickson, J., Li, C.-S. R., & Lowe, L. (2020). A sensorimotor control framework for understanding emotional communication and regulation. Neuroscience and Biobehavioral Reviews, 112, 503-518.

- Sato, W.*, Kochiyama, T.*, Uono, S., Sawada, R., & Yoshikawa, S. (* equal contributors) (2020). Amygdala activity related to perceived social support. Scientific Reports, 10, 2951.

- Kong, F., Heller, A.S., van Reekum, C.M., & Sato, W. (2020). Editorial: Positive neuroscience: the neuroscience of human flourishing. Frontiers in Human Neuroscience, 14, 47.

Links

Psychological Process Research Team (RIKEN)

Contact Information

wataru.sato.ya [at] riken.jp