Knowledge Acquisition and Dialogue

Research Team

Research Summary

Communication through language is an essential tool for robots to cooperate with users in daily life. This research team focuses on modeling intention estimation, thinking, and inference in verbal and non-verbal communication. We are working on the following topics: action management of dialogue robots and spoken-dialogue-related technologies; modeling of thinking, inference, and topic selection by robots; knowledge expressions grounded in robot behaviors and dialogue content; incorporation of emotion and language information; and empathy, emotion, and trust in dialogue.

- Main Research Fields

- Natural language processing

- Spoken language processing

- Intelligence information processing

- Keywords

- Spoken Language Processing (Recognition and Dialogue)

- Natural Language Processing (Understanding, Generation)

- Multimodal Information Processing

- Intelligent Robot Dialogue

- Machine Learning (Reinforcement Learning, Quantum Machine Learning)

- Research themes

- Environment understanding from verbal and non-verbal information

- Inference on knowledge

- Memory representation of experiments and knowledge

- Action decision

- Dialogue response generation

- Robot action generation

Koichiro Yoshino

History

- 2009

- Bachelor of Arts, Keio University

- 2011

- Master of Informatics, Kyoto University

- 2014

- Ph.D. Informatics, Kyoto University

- 2014

- JSPS Research Fellow (PD), ACCMS, Kyoto University

- 2015

- Project Assistant Professor, Graduate School of Information Science, NAIST

- 2016

- Assistant Professor, Graduate School of Information Science, NAIST

- 2016

- PRESTO Researcher, JST

- 2020

- Team Leader, GRP, RIKEN

He has been a Visiting Researcher at AIP, RIKEN since 2017. From 2019 to 2020, he was a visiting researcher at HHU Düsseldorf, Germany.

Awards

- 2013

- SIG Research Award, The Japanese Society for Artificial Intelligence

- 2018

- Outstanding Journal Paper Award, The Association for Natural Language Processing

- 2019

- Best Paper Award, ACL2019 NLP for Conversational AI Workshop

- 2020

- Outstanding Paper Award, The Association for Natural Language Processing

- 2020

- SIG Research Award, The Japanese Society for Artificial Intelligence

- 2020

- Best Paper Award, IWSDS2020

Members

- Canasai Kruengkrai

- Research Scientist

- Angel Garcia Contreras

- Postdoctoral Researcher

- Hideko Habe

- Technical Staff I

- Yoko Matsui

- Technical Staff I

- Tatsuya Kawahara

- Senior Visiting Scientist

- Yusuke Oda

- Visiting Scientist

- Seiya Kawano

- Visiting Scientist

- Shun Inazumi

- Junior Research Associate and Student Trainee

- Kazuyo Onishi

- Junior Research Associate and Student Trainee

- Hyuga Nakaguro

- Junior Research Associate and Student Trainee

- Kai Yoshida

- Student Trainee

- Takuma Miwa

- Student Trainee

- Ryuto Okuno

- Administrative Part-time Worker II

- Muhammad Yeza Baihaqi

- Student Trainee

- Onaka Hien

- Student Trainee

- Komatsu Shusuke

- Student Trainee

- Mori Kiyotada

- Student Trainee

- Sato Takuma

- Student Trainee

- Kazuki Yamauchi

- Student Trainee

Former members

- Kana Miyamoto

- Junior Research Associate(2022/04-2022/05)

- Shohei Tanaka

- Junior Research Associate(2021/04-2023/03)

- Seitaro Shinagawa

- Visiting Scientist(2022/05-2024/04/30)

- Akishige Yuguchi

- Visiting Scientist(2021/04-2025/03)

- Muteki Arioka

- Research Part-time Worker I(2020/08-2022/03)

- Lee Sangmyeong

- Research Part-time Worker I and Student Trainee(2024/05-06)

- Daichi Yoshihara

- Research Part-time Worker II and Student Trainee(2022/05-2023/03)

- Konosuke Yamasaki

- Research Part-time Worker II and Student Trainee(2022/06-2024/03)

- Kanta Watanabe

- Research Part-time Worker II and Student Trainee(2022/06-2024/03)

- Mao Yamaguchi

- Administrative Part-time Worker II(2023/05-2025/03)

- Liu Qianying

- Student Trainee(2021/04-2022/03)

- Taiki Nakamura

- Student Trainee(2021/08-2023/03)

- Yasutaka Odo

- Student Trainee(2022/08-2022/09)

- Kenta Yamamoto

- Student Trainee(2021/08-2023/03)

- Nobuhiro Ueda

- Student Trainee(2021/08-2024/03)

- Shota Kanezaki

- Student Trainee(2021/12-2024/03)

- Ryuichi Sumida

- Student Trainee(2023/8-2024/03)

- Kataoka Shotaro

- Student Trainee(2023/9-2024/03)

- Mizoguchi Tsukito

- Student Trainee(2023/9-2024/03)

- Shang-Chi Tsai

- Student Trainee(2023/11-2024/03)

- Takahiro Hiura

- Student Trainee(2023/05-2025/03)

- Kazuma Fujita

- Student Trainee(2023/05-2025/03)

- Nakaso Yoshihiro

- Student Trainee(2023/11-2025/03)

- Rino Naka

- Research Intern(2021/08-2021/09)

- Issei Matsumoto

- Research Intern(2022/9)

- Wen-Yu Chang

- Research Intern(2023/7-9)

Research results

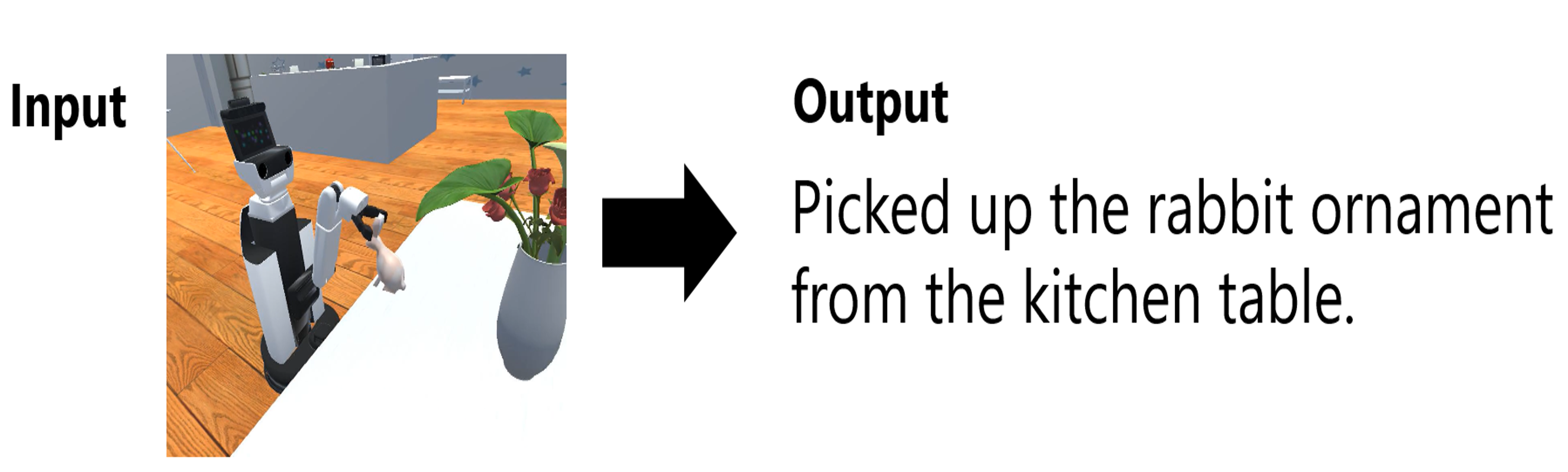

Explanation by the robot itself

We have developed a framework that allows a robot to explain what it has done in natural language. The robot can understand the actions it has taken and explain them in language using the trajectory of the robot's motors and first-person images. This technology improves the explainability of the robot's actions, and even when an action fails, the robot can explain what it tried to do and what happened as a result.

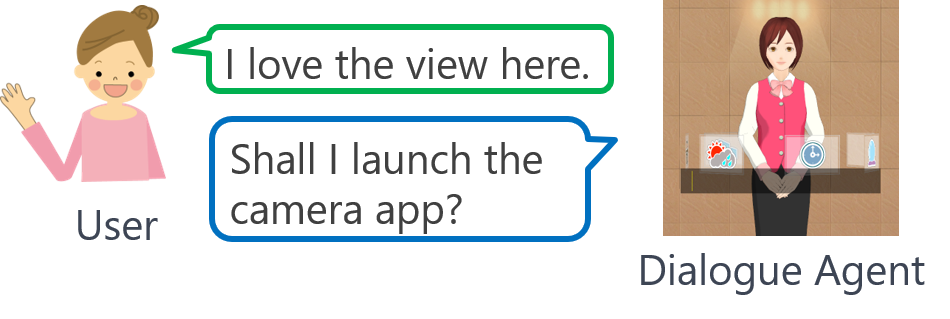

Dialogue system that makes witty recommendations

Dialogue systems need to take appropriate actions not only for clear user requests such as “Please launch the camera app” but also for ambiguous and vague ones such as “I love the view here.” We built such a reflective dialogue system by collecting a corpus that includes pairs of ambiguous requests and corresponding reflective actions. A proposed Positive/Unlabeled (PU) learning method achieved higher classification performance than a conventional PU learning method.

Selected Publications

- Takuma Miwa, Yusuke Oda, Seiya Kawano, Koichiro Yoshino

  "Efficient Channel Generation for QCNN based on Multi-Pauli Matrices"

  In Proceedings of International Conference on Quantum Communications, Networking, and Computing (QCNC), Nara, Japan, March 2025.

- Kai Yoshida, Masahiro Mizukami, Seiya Kawano, Canasai Kruengkrai, Hiroaki Sugiyama, Koichiro Yoshino

  "Training Dialogue Systems by AI Feedback for Improving Overall Dialogue Impression"

  In Proceedings of the 50th IEEE International Conference on Acoustics, Speech, and Signal Processing (ICASSP), Hyderabad, India, April 2025.

- Muhammad Baihaqi, Angel Garcia Contreras, Seiya Kawano, Koichiro Yoshino

  "Rapport-Driven Virtual Agent: Rapport Building Dialogue Strategy for Improving User Experience at First Meeting"

  In Proceedings of the 25th Interspeech Conference (INTERSPEECH), Kos, Greece, September 2024.

- Shun Inadumi, Seiya Kawano, Akishige Yuguchi, Yasutomo Kawanishi, Koichiro Yoshino

  "A Gaze-grounded Visual Question Answering Dataset for Clarifying Ambiguous Questions"

  In Proceedings of The 2024 Joint International Conference on Computational Linguistics, Language Resources and Evaluation (LREC-COLING), Torino, Italy, May 2024.

- Nobuhiro Ueda, Hideko Habe, Yoko Matsui, Akishige Yuguchi, Seiya Kawano, Yasutomo Kawanishi, Sadao Kurohashi, Koichiro Yoshino

  "J-CRe3: A Japanese Conversation Dataset for Real-world Reference Resolution"

  In Proceedings of The 2024 Joint International Conference on Computational Linguistics, Language Resources and Evaluation (LREC-COLING), Torino, Italy, May 2024.

- Shang-Chi Tsai, Seiya Kawano, Angel Garcia Contreras, Koichiro Yoshino, Yun-Nung Chen

  "ASMR: Augmenting Life Scenario using Large Generative Models for Robotic Action Reflection"

  In Proceedings of International Workshop on Spoken Dialogue Systems Technology (IWSDS) 2024, Sapporo, Japan, March 2024, Best Paper Award.

- Shohei Tanaka, Konosuke Yamasaki, Akishige Yuguchi, Seiya Kawano, Satoshi Nakamura, Koichiro Yoshino

  "Do as I Demand, Not as I Say: A Dataset for Developing a Reflective Life Support Robot."

  IEEE Access, Vol. 12, pp.11774-11784, January 2024.

- Koichiro Yoshino, Yun-Nung Chen, Paul Crook, Satwik Kottur, Jinchao Li, Behnam Hedayatnia, Seungwhan Moon, Zhengcong Fei, Zekang Li, Jinchao Zhang, Yang Feng, Jie Zhou, Seokhwan Kim, Yang Liu, Di Jin, Alexandros Papangelis, Karthik Gopalakrishnan, Dilek Hakkani-Tur, Babak Damavandi, Alborz Geramifard, Chiori Hori, Ankit Shah, Chen Zhang, Haizhou Li, João Sedoc, Luis F. D'Haro, Rafael Banchs and Alexander Rudnicky

  "Overview of the Tenth Dialog System Technology Challenge: DSTC10"

  IEEE/ACM Transactions on Audio, Speech and Language Processing (TASLP), Vol. 32, pp.765-768, July 2023

- Taiki Nakamura, Seiya Kawano, Akishige Yuguchi, Yasutomo Kawanishi and Koichiro Yoshino

  "Operative Action Captioning for Estimating System Actions"

  In Proceedings of 2023 IEEE International Conference on Robotics and Automation (ICRA2023), London, UK, May 2023.

- Seiya Kawano, Masahiro Mizukami, Koichiro Yoshino and Satoshi Nakamura

  "Entrainable Neural Conversation Model based on Reinforcement Learning"

  IEEE Access, Vol.8, pp.178283-178294, September 2020.

- Shohei Tanaka, Koichiro Yoshino, Katsuhito Sudoh, Satoshi Nakamura

  "Reflective action selection based on positive-unlabeled learning and causality detection model."

  Computer Speech & Language, 101463. 2023.

- Seiya Kawano, Muteki Arioka, Akishige Yuguchi, Kenta Yamamoto, Koji Inoue, Tatsuya Kawahara, Satoshi Nakamura and Koichiro Yoshino

  "Multimodal Persuasive Dialogue Corpus using Teleoperated Android."

  In Proceedings of INTERSPEECH2022, pp.2308-2312, Incheon, Korea, September 2022.

- Yuya Nakano, Seiya Kawano, Koichiro Yoshino, Katsuhito Sudoh and Satoshi Nakamura

  "Pseudo Ambiguous and Clarifying Questions Based on Sentence Structures Toward Clarifying Question Answering System."

  In Proceedings of Annual Meeting of the Association for Computational Linguistics DialDoc Workshop, Dublin, Ireland, May 2022.

- Akishige Yuguchi, Seiya Kawano, Koichiro Yoshino, Carlos Ishi, Yasutomo Kawanishi, Yutaka Nakamura, Takashi Minato, Yasuki Saito and Michihiko Minoh

  "Butsukusa: A Conversational Mobile Robot Describing Its Own Observations and Internal States."

  In Proceedings of 17th ACM/IEEE International Conference on Human-Robot Interaction, 2022.

- Koichiro Yoshino

  "Embodied Dialogue by Autonomous Robot."

  The third workshop of natural language processing for conversational AI (NLP4ConvAI) at the 2021 Conference on Empirical Methods in Natural Language Processing (EMNLP2021); Invited Talk, 2021.

- Sara Asai, Seitaro Shinagawa, Koichiro Yoshino, Sakriani Sakti, Satoshi Nakamura

  "Eliciting Cooperative Persuasive Dialogue by Multimodal Emotional Robot."

  International Workshop on Spoken Dialogue Systems Technology (IWSDS) 2021.

- Seiya Kawano, Koichiro Yoshino and Satoshi Nakamura

  "Controlled Neural Response Generation by Given Dialogue Acts Based on Label-aware Adversarial Learning"

  Transactions of the Japanese Society for Artificial Intelligence, Vol.36, No.4, July 2021.

- Shohei Tanaka, Koichiro Yoshino, Katsuhito Sudoh and Satoshi Nakamura

  "ARTA: Collection and Classification of Ambiguous Requests and Thoughtful Actions"

  Proceedings of the 22nd Annual Meeting of the Special Interest Group on Discourse and Dialogue (SIGDIAL 2021), Singapore, Singapore, July 2021

- Seiya Kawano, Koichiro Yoshino, David Traum and Satoshi Nakamura

  "Dialogue Structure Parsing on Multi-Floor Dialogue Based on Multi-Task Learning"

  In Proceedings of the first ROBOT-DIAL workshop at IJCAI 2020.

- Tung The Nguyen, Koichiro Yoshino, Sakriani Sakti and Satoshi Nakamura

  "Policy Reuse for Dialog Management Using Action-Relation Probability"

  IEEE Access, Vol.8, pp.159639-159649, 2020

- Luis Fernando D'Haro, Koichiro Yoshino, Chiori Hori, Tim K. Marks, Lazaros Polymenakos, Jonathan K. Kummerfeld, Michel Galley, and Xiang Gao

  "Overview of the seventh Dialog System Technology Challenge: DSTC7"

  Computer Speech & Language, Volume 62, 2020

- Seitaro Shinagawa, Koichiro Yoshino, Seyed Hossein Alavi, Kallirroi Georgila, David Traum, Sakriani Sakti, and Satoshi Nakamura

  "An Interactive Image Editing System using an Uncertainty-based Confirmation Strategy"

  IEEE Access, Vol.8, pp.98471-98480, 2020

- The Tung Nguyen, Koichiro Yoshino, Sakriani Sakti, Satoshi Nakamura

  "Dialog Management of Healthcare Consulting System by Utilizing Deceptive Information"

  Transactions of the Japanese Society for Artificial Intelligence, Vol.35, No.1, pp.DSI-C_1-12, 2020

- Nurul Lubis, Sakriani Sakti, Koichiro Yoshino, Satoshi Nakamura

  "Positive Emotion Elicitation in Chat-based Dialogue Systems"

  IEEE/ACM Transactions on Audio, Speech and Language Processing (TASLP), Vol.27, Issue.4, pp.866-877, April 2019

- Koichiro Yoshino, Kana Ikeuchi, Katsuhito Sudoh, Satoshi Nakamura

  "Improving Spoken Language Understanding by Wisdom of Crowds"

  In Proceedings of The 28th International Conference on Computational Linguistics (COLING2020), Barcelona (virtual), Spain, December 2020 (accepted).

- Koichiro Yoshino, Kohei Wakimoto, Yuta Nishimura and Satoshi Nakamura

  "Caption Generation of Robot Behaviors based on Unsupervised Learning of Action Segments"

  In Proceedings of International Workshop on Spoken Dialogue Systems Technology (IWSDS) 2020, Madrid (virtual), Spain, Sep. 2020.

- Seiya Kawano, Koichiro Yoshino, Satoshi Nakamura

  "Neural Conversation Model Controllable by Given Dialogue Act Based on Adversarial Learning and Label-aware Objective"

  The 12th International Conference on Natural Language Generation (INLG2019), Tokyo, Japan, October 2019.

- Shohei Tanaka, Koichiro Yoshino, Katsuhito Sudoh, Satoshi Nakamura

  "Conversational Response Re-ranking Based on Event Causality and Role Factored Tensor Event Embedding"

  The 1st Workshop on NLP for Conversational AI at ACL 2019, Florence, Italy, July 2019

Links

Knowledge Acquisition and Dialogue Research Team(RIKEN)

Contact Information

koichiro.yoshino [at] riken.jp