Interactive Robot

Research Team

Research Summary

This team aims to develop a social robot that can interact naturally with humans and provide casual, modest support for daily human activities. We explore the mechanisms that underlie natural interaction, as well as robot behaviors that subconsciously change human behavior.

- Main Research Fields

- Human-Robot Interaction

- Keywords

- Humanoid

- Android robot

- Humanlike expression

- Natural interaction

- Non-verbal interaction

- Multimodal recognition and expression

- Research theme

- Modeling human behaviors in interaction and generating natural robot behavior

- Recognizing the state of human activity based on multimodal information

- Designing robot behaviors that lead to subconscious behavioral change in humans

Takashi Minato

History

- 2001

- Japan Science and Technology Agency (JST)

- 2002

- Osaka University

- 2006

- Japan Science and Technology Agency (JST)

- 2011

- Advanced Telecommunications Research Institute International (ATR)

- 2020

- RIKEN

Awards

- 2016

- ATR Prize for excellent study

- 2020

- ATR Prize for excellent study

Members

- Carlos Toshinori Ishi

- Senior Scientist

- Kurima Sakai

- Research Scientist

- Takahisa Uchida

- Research Scientist

- Bowen Wu

- Special Postdoctoral Researcher

- Ayaka Fujii

- Postdoctoral Researcher

- Tomo Funayama

- Technical Staff I

- Yuka Nakayama

- Technical Staff I

- Takayuki Kanda

- Visiting Scientist

- Takamasa Iio

- Visiting Scientist

- Chaoran Liu

- Visiting Scientist

- Jiaqi Shi

- Visiting Scientist

- Taiken Shintani

- Visiting Scientist

- Zihan Lin

- Research Part-time Worker I and Student Trainee

- Shudai Deguchi

- Research Part-time Worker I and Student Trainee

- Naomi Uratani

- Administrative Part-time Worker I

- Taiki Yano

- Research Part-time Worker II and Student Trainee

- Haruto Ueno

- Research Part-time Worker II and Student Trainee

- Nabeela Khanum Khan

- Student Trainee

Former members

- Ryusuke Mikata

- Technical Staff I (2022/08-2023/07)

- Changzeng Fu

- Visiting Scientist (2022/5-2023/3)

- Alexander Diel

- Visiting Researcher (2022/07-2023/05)

- Houjian Guo

- Research Part-time Worker II and Student Trainee (2022/8-2024/3)

- Niina Okuda

- Research Part-time Worker II and Student Trainee (2023/06-2025/03)

- Rin Takahira

- Research Part-time Worker II and Student Trainee (2024/05-2025/03)

- Atsushi Toyoda

- Research Part-time Worker II and Student Trainee (2024/09-2025/03)

- Namika Taya

- Administrative Part-time Worker II and Student Trainee (2022/12-2024/3)

- Taichi Matsuoka

- Administrative Part-time Worker II and Student Trainee (2023/6-2024/3)

- Mayuka Yamasaki

- Administrative Part-time Worker II and Student Trainee (2023/06-2025/03)

- Minjeong Bae

- Administrative Part-time Worker II and Student Trainee (2023/06-2025/03)

- Yuki Hori

- Student Trainee (2020/08-2022/03)

- Simon Andreas Piorecki

- Student Trainee (2023/12-2024/08)

- Teruya Inoue

- Student Trainee (2024/06-2025/03)

- Kakeru Sakuma

- Research Intern (2023/9)

Research results

Upper body gestures

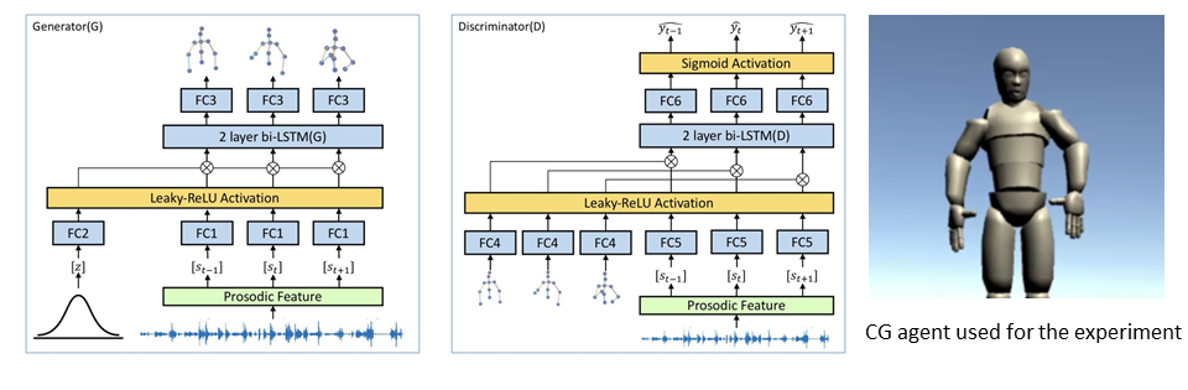

To generate natural robot movements during interaction, we are studying upper body gestures that are synchronized with the robot's voice. A conditional GAN (generative adversarial network) model is trained on data of natural human gestures recorded during speech. The model generates body and hand gestures from input speech signals. So far, we have implemented the model in a CG agent and shown through subject experiments that it generates human-like gestures.
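The conditioning idea can be illustrated with a minimal sketch of a conditional generator's input and output interface. Everything here is an assumption made for illustration: the feature sizes, the single tanh layer, the random untrained weights, and the names `make_generator` and `generate` are hypothetical and are not the team's model. The discriminator and the adversarial training loop are omitted entirely.

```python
import math
import random


def make_generator(speech_dim, noise_dim, n_joints, rng):
    """Build a toy one-layer conditional generator (hypothetical, untrained).

    The generator maps [speech features + noise] to one value per joint.
    In a real conditional GAN the weights would be learned adversarially.
    """
    in_dim = speech_dim + noise_dim
    # Random weights stand in for trained parameters (assumption).
    weights = [[rng.gauss(0.0, 0.1) for _ in range(in_dim)]
               for _ in range(n_joints)]

    def generate(speech_features, noise):
        # Conditioning: the speech features are concatenated with the noise
        # vector, so the output depends on both.
        x = list(speech_features) + list(noise)
        if len(x) != in_dim:
            raise ValueError("unexpected input size")
        # tanh keeps each normalized joint value in [-1, 1].
        return [math.tanh(sum(w * v for w, v in zip(row, x)))
                for row in weights]

    return generate


rng = random.Random(0)
generate = make_generator(speech_dim=4, noise_dim=2, n_joints=3, rng=rng)

speech = [0.2, -0.1, 0.7, 0.0]  # hypothetical speech features for one frame
pose_a = generate(speech, [rng.gauss(0, 1) for _ in range(2)])
pose_b = generate(speech, [rng.gauss(0, 1) for _ in range(2)])
print(pose_a)
print(pose_b)
```

Because the noise vector differs between the two calls, the same speech input can yield different gestures, which is one reason a generative model suits speech-driven gesture: many gestures are plausible for one utterance.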

Gaze control

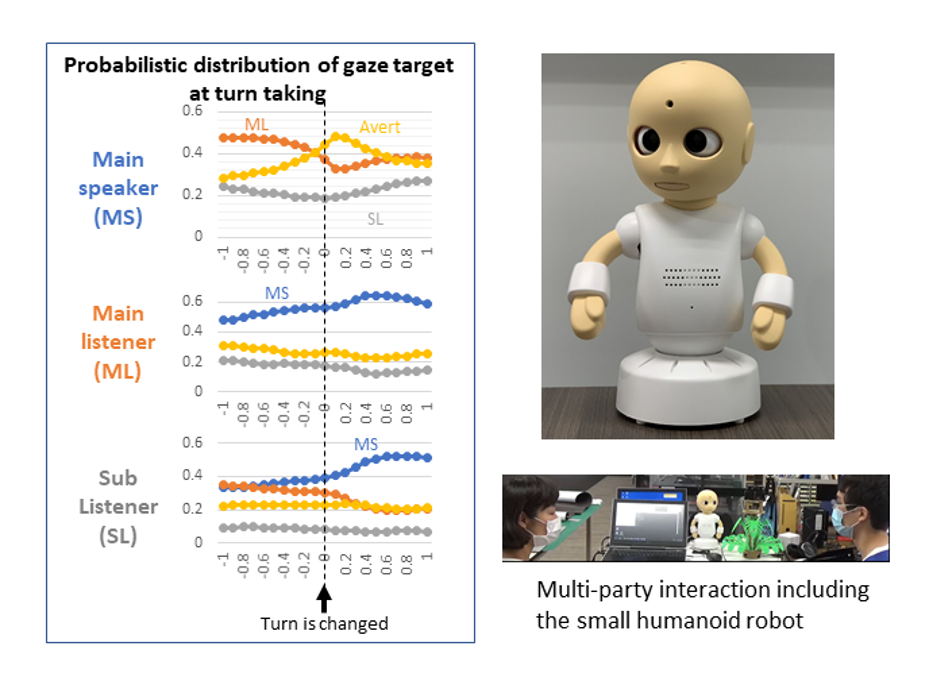

To generate natural robot motions, we are studying gaze control in face-to-face interactions between a robot and multiple people. Using data from multi-person dialogues, we modeled the occurrence probabilities of eye contact and looking away, as well as the direction of looking away, as a function of each participant's role in the dialogue (speaker, main listener, sub-listener, etc.), and developed a gaze behavior model from them. We implemented this model to control the gaze of a small robot, and through subjective experiments we have shown that the model generates more human-like behavior than conventional methods.
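A role-dependent probabilistic gaze model of this kind can be sketched as follows. The probability values, the set of aversion directions, and the names `GAZE_MODEL` and `sample_gaze` are hypothetical placeholders chosen for illustration; they are not the values or the structure estimated from the team's dialogue data.

```python
import random

# Hypothetical per-role parameters (illustrative values only):
# probability of eye contact, and a distribution over aversion directions
# used when the robot looks away.
GAZE_MODEL = {
    "speaker": {
        "eye_contact": 0.5,
        "aversion_dirs": {"down": 0.5, "left": 0.25, "right": 0.25},
    },
    "main_listener": {
        "eye_contact": 0.8,
        "aversion_dirs": {"down": 0.6, "left": 0.2, "right": 0.2},
    },
    "sub_listener": {
        "eye_contact": 0.6,
        "aversion_dirs": {"down": 0.4, "left": 0.3, "right": 0.3},
    },
}


def sample_gaze(role, rng):
    """Sample one gaze behavior for the given dialogue role.

    Returns ("eye_contact", None) or ("look_away", direction).
    """
    params = GAZE_MODEL[role]
    if rng.random() < params["eye_contact"]:
        return ("eye_contact", None)
    directions = list(params["aversion_dirs"])
    weights = [params["aversion_dirs"][d] for d in directions]
    direction = rng.choices(directions, weights=weights, k=1)[0]
    return ("look_away", direction)


rng = random.Random(0)
for role in GAZE_MODEL:
    print(role, [sample_gaze(role, rng) for _ in range(3)])
```

A real controller would also need timing (how long each gaze state lasts) and a way to pick which person to look at; this sketch covers only the role-conditioned choice between eye contact and an aversion direction.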

Selected Publications

- Taiken Shintani, Carlos T. Ishi, and Hiroshi Ishiguro

  Gaze modeling in multi-party dialogues and extraversion expression through gaze aversion control

  Advanced Robotics, Vol.38, Issue 19-20 (2024)

- Ird Ali Durrani, Chaoran Liu, Carlos T. Ishi, and Hiroshi Ishiguro

  Is it possible to recognize a speaker without listening? Unraveling conversation dynamics in multi-party interactions using continuous eye gaze

  IEEE Robotics and Automation Letters, Vol.9, Issue 11, pp.9923-9929 (2024)

- Kazuki Sakai, Koh Mitsuda, Yuichiro Yoshikawa, Ryuichiro Higashinaka, Takashi Minato, and Hiroshi Ishiguro

  Effects of demonstrating consensus between robots to change user's opinion

  International Journal of Social Robotics (2024)

- Takahisa Uchida, Takashi Minato, and Hiroshi Ishiguro

  Opinion Attribution Improves Motivation to Exchange Subjective Opinions with Humanoid Robots

  Frontiers in Robotics and AI, Vol.11, No.1175879 (2024)

- Alexander Diel, Wataru Sato, Chun-Ting Hsu, and Takashi Minato

  Asynchrony enhances uncanniness in human, android, and virtual dynamic facial expressions

  BMC Research Notes, Vol.16, No.368 (2023)

- Tian Ye, Takashi Minato, Kurima Sakai, Hidenobu Sumioka, Antonia Hamilton, and Hiroshi Ishiguro

  Human-like interactions prompt people to take a robot's perspective

  Frontiers in Psychology, Vol.14, No.1190620 (2023)

- Alexander Diel, Wataru Sato, Chun-Ting Hsu, and Takashi Minato

  Differences in configural processing for human versus android dynamic facial expressions

  Scientific Reports, Vol.13, No.16952 (2023)

- Bowen Wu, Chaoran Liu, Carlos T. Ishi, Jiaqi Shi, and Hiroshi Ishiguro

  Extrovert or introvert? GAN-based humanoid upper-body gesture generation for different impressions

  International Journal of Social Robotics (2023)

- Alexander Diel, Wataru Sato, Chun-Ting Hsu, and Takashi Minato

  The inversion effect on the cubic humanness-uncanniness relation in humanlike agents

  Frontiers in Psychology, Vol.14, No.1222279 (2023)

- Carlos T. Ishi, Chaoran Liu, and Takashi Minato

  An attention-based sound selective hearing support system: evaluation by subjects with age-related hearing loss

  Proc. of IEEE/SICE International Symposium on System Integrations (2023)

- Xinyue Li, Carlos T. Ishi, and Ryoko Hayashi

  Prosodic and voice quality analyses of filled pauses in Japanese spontaneous conversation by Chinese learners and Japanese native speakers

  Proc. of International Conference on Speech Prosody (2022)

- Dongsheng Yang, Qianying Liu, Takashi Minato, Shushi Namba, and Shin'ya Nishida

  Optimizing facial expressions of an android robot effectively: a Bayesian optimization approach

  Proc. of IEEE-RAS International Conference on Humanoid Robots (2022)

- Takashi Minato, Kurima Sakai, Takahisa Uchida, and Hiroshi Ishiguro

  A study of interactive robot architecture through the practical implementation of conversational android

  Frontiers in Robotics and AI, Vol.9, No.905030 (2022)

- Changzeng Fu, Chaoran Liu, Carlos T. Ishi, and Hiroshi Ishiguro

  C-CycleTransGAN: A non-parallel controllable cross-gender voice conversion model with CycleGAN and Transformer

  Proc. of Asia Pacific Signal and Information Processing Association Annual Summit and Conference (2022)

- Taiken Shintani, Carlos T. Ishi, and Hiroshi Ishiguro

  Expression of personality by gaze movements of an android robot in multi-party dialogues

  Proc. of IEEE International Conference on Robot and Human Interactive Communication (2022)

- Changzeng Fu, Chaoran Liu, Carlos T. Ishi, and Hiroshi Ishiguro

  An adversarial training based speech emotion classifier with isolated Gaussian regularization

  IEEE Transactions on Affective Computing, Vol.14, No.8 (2022)

- Bowen Wu, Jiaqi Shi, Chaoran Liu, Carlos T. Ishi, and Hiroshi Ishiguro

  Controlling the impression of robots via GAN-based gesture generation

  Proc. of IEEE/RSJ International Conference on Intelligent Robots and Systems (2022)

- Wataru Sato, Shushi Namba, Dongsheng Yang, Shin'ya Nishida, Carlos T. Ishi, and Takashi Minato

  An android for emotional interaction: Spatiotemporal validation of its facial expressions

  Frontiers in Psychology, Vol.12 (2022)

- Jiaqi Shi, Chaoran Liu, Carlos T. Ishi, and Hiroshi Ishiguro

  3D skeletal movement-enhanced emotion recognition networks

  APSIPA Transactions on Signal and Information Processing, Vol.10, No.E12 (2021)

Links

Interactive Robot Research Team (RIKEN)

Contact Information

takashi.minato [at] riken.jp